Backup Proxmox with Pulsed Media Storage Box

A Pulsed Media storage box is built for offsite backup. This page shows five working ways to point a Proxmox VE host or a Proxmox Backup Server at one, ranked from simple to advanced. Pick the path that matches what you already have.

If you don't have a storage box yet, see the storage box plans. The M-series tiers run RAID5 and are the better fit for backup targets where redundancy matters.

Pick a method

| Method | What it gives you | When to pick it |
|---|---|---|
| 1. BorgBackup over SFTP | Encrypted, deduplicated backups, tiny incrementals, easy restore | You want the simplest path that just works. BorgBackup is pre-installed on the storage box. |
| 2. Restic over SFTP | Same as Borg, different tool, slightly different feature set | You already use Restic elsewhere or prefer its tags and snapshot model. |
| 3. rclone | Mount the storage box as a local directory, or sync to it | You want a pluggable filesystem layer, or you already use rclone for cloud tiering. |
| 4. PBS local + offsite rsync | Keep PBS where it works (local ext4/XFS), copy the chunk store to the storage box every night | You already run PBS on your own hardware and want a cheap offsite copy as the third leg of 3-2-1 backup. |
| 5. S3 backend (rclone serve s3 or MinIO) | PBS native S3 datastore (PBS 4.0+), full PBS feature set against the storage box | You want PBS chunk-level dedup with the storage box behind it. Heaviest setup. Requires PBS 4.0 or newer on your PBS host. |

If you don't already run PBS, methods 1 or 2 are usually what you want. PBS is a separate piece of software you'd run on your own machine. Methods 4 and 5 only make sense once that's already in place.

Before you start

Every method below assumes:

- An active Pulsed Media storage box. Any tier works for methods 1 to 4. Method 5 needs a tier with enough RAM for Docker rootless.

- SSH key access to the storage box. If you haven't set this up yet, see Seedbox access via FTP, SSH and SFTP.

- Enough free quota for what you're backing up. Your first backup transfers close to the full size of your source data. Pre-compressed VM dumps (.vma, .tar.zst) gain little from Borg or Restic compression, so plan for at least 1× your largest backup set, plus headroom for the number of snapshots you intend to keep.

The examples below use these placeholders. Replace with your own values:

- USERNAME: your storage box username

- SERVER.pulsedmedia.com: the FQDN you got at signup

- PORT: your SSH port (22 by default; check your welcome email if it differs)

Method 1: BorgBackup over SFTP

BorgBackup is the recommended path. It's pre-installed on every storage box. Backups are encrypted on the client before they leave your machine. Deduplication is aggressive enough that incrementals drop to a few KB when little has changed, even if the source data is hundreds of GB.

Expect the first backup to take a while. Over a typical European broadband link, 100 GB transfers in a few hours. Subsequent runs are minutes. Schedule the first run during off-peak hours so you don't compete with whatever else is using your bandwidth.
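To turn "a few hours" into a number for your own link, divide the data size by the sustained upload rate. The helper below is only a sketch: the function name transfer_hours and the sample speeds are illustrative, and it assumes decimal units (1 GB = 8000 Mbit) with no protocol overhead, so real runs will be somewhat slower.

```shell
# Estimate upload time in hours for SIZE_GB gigabytes at MBIT megabits per second.
# Assumes decimal units and a link that sustains the full rate.
transfer_hours() {
    awk -v gb="$1" -v mbit="$2" 'BEGIN { printf "%.1f\n", gb * 8000 / mbit / 3600 }'
}

transfer_hours 100 100   # 100 GB at 100 Mbit/s, prints 2.2
transfer_hours 100 40    # 100 GB at 40 Mbit/s, prints 5.6
```

Use your measured upload speed, not the advertised download speed, as the second argument.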

Install BorgBackup on your Proxmox host

apt update && apt install -y borgbackup

Initialize the repo

export BORG_PASSPHRASE='your-strong-passphrase'
borg init --encryption=repokey ssh://USERNAME@SERVER.pulsedmedia.com:PORT/~/borg-repo

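borg init creates the repository and its encryption key. Because BORG_PASSPHRASE is exported, Borg reads the passphrase from the environment instead of prompting for it. In repokey mode the key is stored inside the repository, so the passphrase is the secret you must not lose.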

Back up a directory

borg create --stats --progress \
  "ssh://USERNAME@SERVER.pulsedmedia.com:PORT/~/borg-repo::$(date +%Y-%m-%dT%H:%M)" \
  /etc /var/lib/vz/dump

After the first full backup, subsequent runs only transfer changed chunks. In our test the second run with one modified file moved 1.6 KiB out of 12 MiB total. The ratio improves as the repo accumulates chunks from stable data.

Schedule it

Don't put the passphrase directly in the cron command. Setting BORG_PASSPHRASE inline is shorter, but it leaks the passphrase to anyone who can read the crontab file or /proc/<pid>/environ while Borg is running. Use a passphrase file instead:

# One-time setup on the Proxmox host
echo 'your-strong-passphrase' > /root/.borg-pass
chmod 600 /root/.borg-pass
chown root:root /root/.borg-pass

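Then the cron entry reads from that file. The root user field means this line belongs in a file under /etc/cron.d/. Cron does not support backslash line continuations, so keep the whole entry on one line:

0 2 * * * root BORG_PASSCOMMAND='cat /root/.borg-pass' borg create --stats "ssh://USERNAME@SERVER.pulsedmedia.com:PORT/~/borg-repo::$(date +\%Y-\%m-\%d)" /etc /var/lib/vz/dump >> /var/log/borg.log 2>&1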

BORG_PASSCOMMAND reads the passphrase from a restricted file at runtime. The passphrase never appears in the process environment or the cron command line.
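Once the job grows beyond a single command, a small wrapper script that cron calls is easier to maintain than a long crontab line. The following is a minimal sketch, not a finished tool: the function name borg_nightly, the retention counts, and the DRY_RUN switch are assumptions made for illustration, and borg compact assumes Borg 1.2 or newer on the Proxmox host.

```shell
#!/bin/sh
# Sketch of a cron-friendly Borg wrapper. REPO, the retention counts and
# DRY_RUN are illustrative assumptions, not values from this guide.
REPO="${REPO:-ssh://USERNAME@SERVER.pulsedmedia.com:PORT/~/borg-repo}"

# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

borg_nightly() {
    # Passphrase is read from the restricted file at runtime.
    export BORG_PASSCOMMAND='cat /root/.borg-pass'
    archive="$(date +%Y-%m-%d)"
    # Stop at the first failing step so a broken backup is never followed by a prune.
    run borg create --stats "${REPO}::${archive}" /etc /var/lib/vz/dump &&
    run borg prune --keep-daily 7 --keep-weekly 4 --keep-monthly 6 "$REPO" &&
    run borg compact "$REPO"
}
```

Calling DRY_RUN=1 borg_nightly prints the three commands without touching the repository, which is a cheap way to review the retention policy before the first real run.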

Restore

borg list ssh://USERNAME@SERVER.pulsedmedia.com:PORT/~/borg-repo
borg extract ssh://USERNAME@SERVER.pulsedmedia.com:PORT/~/borg-repo::ARCHIVE_NAME

For VM-level restores, run vzdump first to produce a single backup file in /var/lib/vz/dump/, then let Borg back that up. PVE can restore the VMA file directly through the GUI once you bring it back.
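After borg extract has brought a dump directory back, you usually want the newest dump for one VM. The helper below is a sketch under assumptions: the function name latest_dump is made up, it assumes the usual vzdump-qemu-VMID-DATE.vma naming, and it relies on the timestamp in the file name sorting chronologically.

```shell
# Print the newest vzdump archive for a VM ID found in a dump directory.
# Assumes file names without whitespace and the standard vzdump naming scheme.
latest_dump() {
    vmid="$1"
    dir="${2:-/var/lib/vz/dump}"
    ls -1 "$dir"/vzdump-qemu-"$vmid"-*.vma* 2>/dev/null |
        grep -v -e '\.notes$' -e '\.log$' |   # skip sidecar files
        sort | tail -n 1
}
```

A restore could then look like qmrestore "$(latest_dump 100 ./var/lib/vz/dump)" 9100, where 9100 stands for an unused VM ID of your choice and the relative path reflects that Borg extracts into the current directory.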

Method 2: Restic over SFTP

Restic is also pre-installed on the storage box. Setup is almost identical to Borg with different command names.

Install on your Proxmox host

apt update && apt install -y restic

Initialize

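export RESTIC_PASSWORD='your-strong-passphrase'
export RESTIC_REPOSITORY='sftp:USERNAME@SERVER.pulsedmedia.com:/home/USERNAME/restic-repo'
# If your SSH port is not 22, use this form instead (note the double slash before the path):
# export RESTIC_REPOSITORY='sftp://USERNAME@SERVER.pulsedmedia.com:PORT//home/USERNAME/restic-repo'
restic init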

Back up

restic backup /etc /var/lib/vz/dump --tag proxmox

List and restore

restic snapshots
restic restore latest --target /tmp/restore --include /etc

Restic and Borg both work. Pick one and stick with it. The repo formats are incompatible.
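For unattended Restic runs, the same advice as for Borg applies: read the password from a restricted file instead of exporting it inline. The sketch below assumes a hypothetical password file at /root/.restic-pass (mode 600) and illustrative retention counts; restic_nightly and DRY_RUN are names chosen for this example.

```shell
#!/bin/sh
# Sketch of a nightly Restic job. The password file path, retention counts
# and DRY_RUN switch are assumptions for illustration.
RESTIC_REPOSITORY="${RESTIC_REPOSITORY:-sftp:USERNAME@SERVER.pulsedmedia.com:/home/USERNAME/restic-repo}"
RESTIC_PASSWORD_FILE="${RESTIC_PASSWORD_FILE:-/root/.restic-pass}"
export RESTIC_REPOSITORY RESTIC_PASSWORD_FILE

# Print the command when DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

restic_nightly() {
    run restic backup /etc /var/lib/vz/dump --tag proxmox &&
    # Apply retention only to snapshots carrying the proxmox tag, then free space.
    run restic forget --tag proxmox --keep-daily 7 --keep-weekly 4 --prune &&
    # Verify repository structure after pruning.
    run restic check
}
```

Run DRY_RUN=1 restic_nightly once to see the exact commands before scheduling the script.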

Method 3: rclone

Rclone is the right tool when you want the storage box to look like a local filesystem, or when you're already using rclone for tiered storage between a seedbox and a storage box.

Configure the SFTP remote

Run rclone config on the Proxmox host and follow the prompts, or paste this directly into ~/.config/rclone/rclone.conf:

[pmsb]
type = sftp
host = SERVER.pulsedmedia.com
port = PORT
user = USERNAME
key_file = ~/.ssh/id_ed25519
shell_type = unix
md5sum_command = md5sum
sha1sum_command = sha1sum

Test it:

rclone lsd pmsb:

Sync (recommended for backups)

rclone sync /var/lib/vz/dump pmsb:proxmox-dumps --progress

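rclone sync is more reliable than mount for backup workflows. It runs to completion, checks sizes and hashes of what it transferred, and exits with a status code you can test. Note that sync makes the destination match the source, so dumps deleted locally are also deleted from the storage box; use rclone copy if you want to keep them.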

Mount (for browse/edit, not backup pipelines)

mkdir -p /mnt/pmsb
rclone mount pmsb: /mnt/pmsb \
  --vfs-cache-mode full \
  --vfs-cache-max-age 24h \
  --vfs-write-back 5s \
  --daemon
ls /mnt/pmsb/

The VFS cache modes matter. Without --vfs-cache-mode full, writes can show transient I/O errors before the cache flushes. Don't run a backup tool against an rclone mount — use rclone sync for that.

To unmount:

fusermount -u /mnt/pmsb
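If a script ever reads from or writes to the mount, it helps to confirm the mount is live first; otherwise files silently land in the empty local directory. A minimal sketch, assuming the mountpoint utility from util-linux is available (the function name is_mounted is illustrative):

```shell
# Succeeds only when the given path is an active mount point.
is_mounted() {
    mountpoint -q "$1"
}

# Only touch the mount when it is live.
if is_mounted /mnt/pmsb; then
    ls /mnt/pmsb/
else
    echo "/mnt/pmsb is not mounted" >&2
fi
```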

Method 4: PBS local + offsite rsync

If you already run Proxmox Backup Server on local hardware, the storage box becomes the cheap offsite leg. PBS keeps its chunk store on local ext4/XFS where it works correctly. rsync ships a copy to the storage box every night.

This is a cold offsite copy, not a warm second PBS. Restoring goes: rsync the chunks back to a real PBS host first, then restore from that PBS. The storage box is the safety net, not a live target.

A PBS instance other than the one that wrote the chunks may not recognize the synced datastore without manual re-registration. Test a restore to a spare PBS before you need it under pressure — a backup you have never restored is not a backup.
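As part of such a restore test, a quick sanity check on the directory you pulled back can catch an incomplete copy before you register it with PBS. This is a sketch under assumptions: looks_like_pbs_datastore is a made-up name, and it only checks for the usual layout (a .chunks directory plus at least one of the vm, ct, host, or ns directories), not chunk integrity.

```shell
# Return success if DIR has the directory layout of a PBS datastore.
# This is a structural check only; it does not verify any chunk contents.
looks_like_pbs_datastore() {
    dir="$1"
    [ -d "$dir/.chunks" ] || return 1
    for kind in vm ct host ns; do
        [ -d "$dir/$kind" ] && return 0
    done
    return 1
}
```

On the spare PBS host you would first copy the data back with rsync in the reverse direction, and you will probably need chown -R backup:backup on the result, since ownership is not preserved on the storage box. Then run looks_like_pbs_datastore on the target directory and follow up with a real PBS verify job.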

Schedule the rsync

On the PBS host:

# /etc/cron.d/pbs-offsite-rsync

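0 2 * * * root rsync -aAX --delete-after -e 'ssh -p PORT' /var/lib/proxmox-backup/datastore/main/ USERNAME@SERVER.pulsedmedia.com:~/pbs-datastore-offsite/ >> /var/log/pbs-offsite-rsync.log 2>&1

Cron does not support backslash line continuations, so the entry stays on one line. The -e 'ssh -p PORT' option is only needed when your SSH port is not 22.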

The --delete-after flag mirrors PBS prune operations to the storage box, so old chunks removed by PBS retention also disappear from the offsite copy. If you want a longer retention on the storage box than on the local PBS, drop --delete-after and clean manually.

Why not just point PBS at the storage box directly

PBS datastores require ext4, XFS, or ZFS. Network filesystems, FUSE, and SFTP are not supported. PBS chunk operations need atomic rename() and atime updates that network mounts don't reliably provide. Method 4 sidesteps this by keeping PBS on its native filesystem and treating the storage box as a backup of a backup.

Method 5: S3 backend for PBS

PBS 4.0 (released August 2025) added native S3-compatible object storage as a datastore backend. The storage box doesn't run S3 out of the box, but you can put an S3 endpoint on top of it in two ways: a single rclone serve s3 command, or a MinIO container.

Option 5a: rclone serve s3 (lightweight)

The simplest path. Requires rclone 1.65 or newer.

Check what's installed first:

rclone version

If you're below 1.65, grab the latest binary from rclone.org/downloads and drop it into ~/bin/.

On the storage box, in a tmux session so it survives disconnect:

tmux new -s rclone-s3

rclone serve s3 --addr 0.0.0.0:9000 \
  --auth-key 'YOUR-ACCESS-KEY,YOUR-SECRET-KEY' \
  /home/USERNAME/s3-bucket

# Detach: Ctrl-b then d

Option 5b: MinIO via Docker rootless (more featured)

MinIO gives you a web console on port 9001, bucket policies, versioning, and metrics. It uses more RAM than rclone serve.

On the storage box (with Rootless Docker enabled):

docker run -d --name minio --restart unless-stopped \
  -p 9000:9000 -p 9001:9001 \
  -e MINIO_ROOT_USER=YOUR-ACCESS-KEY \
  -e MINIO_ROOT_PASSWORD=YOUR-SECRET-KEY \
  -v /home/USERNAME/minio-data:/data \
  minio/minio:latest server /data --console-address :9001

The web console comes up at http://SERVER.pulsedmedia.com:9001 after you allow the port, or at https:// once TLS is configured (see "TLS is required on the storage box"). Log in with the access key and secret you set in the run command.

Security checklist before you expose MinIO publicly:

- Change the access key and secret to long, randomly generated values. Default credentials let anyone read your backups.

- Restrict the listen interface to a VPN or known client IP if you don't need public access. The example above binds 9000 and 9001 to all interfaces.

- Set up TLS, either via Let's Encrypt or a self-signed cert. PBS will not accept HTTP S3 endpoints.

- Set the data directory permission to 700 so only your user can read the bucket files at rest: chmod 700 /home/USERNAME/minio-data.
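For the first checklist item, one way to produce long random credentials without extra tools is to read from /dev/urandom. This is a sketch: rand_hex is an illustrative helper name, and the lengths (20 and 40 hex characters) are an assumption chosen to be comfortably long, not a requirement stated by MinIO or rclone.

```shell
# Print N random bytes as 2*N lowercase hex characters.
rand_hex() {
    head -c "$1" /dev/urandom | od -An -tx1 | tr -d ' \n'
}

ACCESS_KEY="$(rand_hex 10)"   # 20 hex characters
SECRET_KEY="$(rand_hex 20)"   # 40 hex characters
printf 'access key: %s\nsecret key: %s\n' "$ACCESS_KEY" "$SECRET_KEY"
```

Substitute the printed values for YOUR-ACCESS-KEY and YOUR-SECRET-KEY, and store them somewhere other than the storage box itself.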

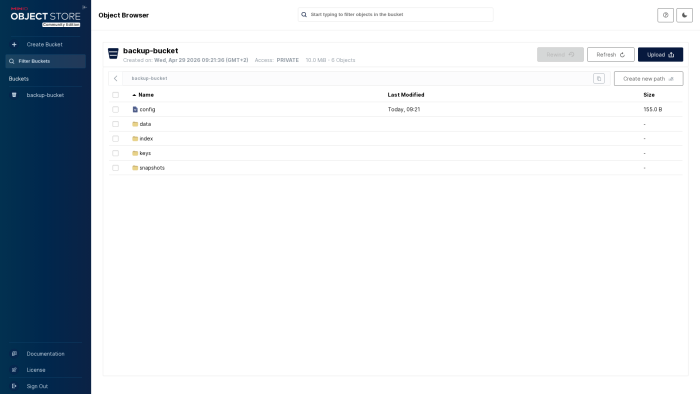

Once you've run a backup against the bucket you can drill in to verify the data is actually there. The screenshot above shows a Restic backup repository inside backup-bucket: config, data, index, keys, and snapshots are the standard Restic layout. PBS will populate the bucket with its own chunk layout once you point it at this bucket as an S3 datastore.

TLS is required on the storage box

PBS only speaks HTTPS to S3 endpoints. Plain HTTP will not work. Before you can point PBS at your storage box, the S3 server on the storage box needs a TLS certificate.

For rclone serve s3, generate a self-signed cert and pass it in:

openssl req -x509 -newkey rsa:2048 -days 365 -nodes \
  -keyout /home/USERNAME/s3-key.pem \
  -out /home/USERNAME/s3-cert.pem \
  -subj "/CN=SERVER.pulsedmedia.com"

rclone serve s3 --addr 0.0.0.0:9000 \
  --cert /home/USERNAME/s3-cert.pem \
  --key /home/USERNAME/s3-key.pem \
  --auth-key 'YOUR-ACCESS-KEY,YOUR-SECRET-KEY' \
  /home/USERNAME/s3-bucket

For MinIO, mount certs at /root/.minio/certs/ inside the container:

mkdir -p /home/USERNAME/minio-certs
# Place public.crt and private.key in /home/USERNAME/minio-certs/
# If the earlier non-TLS container still exists, remove it first: docker rm -f minio
docker run -d --name minio --restart unless-stopped \
  -p 9000:9000 -p 9001:9001 \
  -e MINIO_ROOT_USER=YOUR-ACCESS-KEY \
  -e MINIO_ROOT_PASSWORD=YOUR-SECRET-KEY \
  -v /home/USERNAME/minio-data:/data \
  -v /home/USERNAME/minio-certs:/root/.minio/certs \
  minio/minio:latest server /data --console-address :9001

A proper certificate from Let's Encrypt is the better path if your storage box has a stable hostname. With a self-signed cert, PBS must either trust the cert or be given its SHA-256 fingerprint (see Caveats).

Configure PBS to use the S3 endpoint

The CLI form is the most reliable, since GUI labels shift between PBS versions. On the PBS server:

proxmox-backup-manager s3 endpoint create pm-storagebox \
  --endpoint 'https://SERVER.pulsedmedia.com:9000' \
  --region 'us-east-1' \
  --access-key 'YOUR-ACCESS-KEY' \
  --secret-key 'YOUR-SECRET-KEY' \
  --path-style true

proxmox-backup-manager datastore create pm-offsite /var/cache/proxmox-backup-s3 \
  --backend type=s3,client=pm-storagebox,bucket=backup-bucket

The same steps are available in the PBS web UI under Configuration → Remotes → S3 Endpoints and then Datastore → Add. Field names may differ slightly between PBS releases, so treat the CLI commands above as the reference.

PBS still requires a local cache disk on the PBS host. Proxmox recommends 64 to 128 GiB. The cache holds metadata and recent chunks. The storage box holds the bulk.
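Before creating the datastore, it is worth confirming that the filesystem holding the cache path really has that much room. The check below is a sketch; free_gib and has_cache_room are illustrative names, and the 64 GiB threshold in the usage comment is the lower bound quoted above.

```shell
# Print free space, in whole GiB, on the filesystem that contains PATH.
free_gib() {
    df -Pk "$1" | awk 'NR == 2 { printf "%d\n", $4 / 1024 / 1024 }'
}

# Succeed when at least MIN_GIB are free on the filesystem containing PATH.
has_cache_room() {
    [ "$(free_gib "$1")" -ge "$2" ]
}

# Example: has_cache_room /var/cache 64 || echo "cache disk too small" >&2
```

Point the check at an existing parent directory such as /var/cache, since the cache directory itself may not exist before the datastore is created.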

Caveats

- The S3 backend is only in PBS 4.0 and newer. Earlier PBS won't see the option.

- PBS requires HTTPS. Plain HTTP endpoints are rejected. Set up TLS on the storage box first.

- If you use a self-signed cert, either install the cert as trusted on the PBS host or pass --fingerprint with the cert SHA256.

- If your S3 hostname doesn't resolve over IPv6, make sure your PBS host can reach it over IPv4.
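For the self-signed case, the SHA-256 fingerprint can be read from the certificate file with openssl. The helper below is a sketch: cert_fingerprint is an illustrative name, and it assumes PBS accepts the colon-separated lowercase form that it displays for its own certificates.

```shell
# Print the SHA-256 fingerprint of a PEM certificate as colon-separated lowercase hex.
cert_fingerprint() {
    openssl x509 -in "$1" -noout -fingerprint -sha256 |
        cut -d= -f2 |
        tr 'A-F' 'a-f'
}

# Example: cert_fingerprint /home/USERNAME/s3-cert.pem
```

Run it on the storage box against the certificate the S3 server uses, then copy the output into the --fingerprint option on the PBS host.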

What we don't recommend

Don't run PBS itself on the storage box. PBS needs at least 2 GB of RAM, port 8007 open, and a root daemon. Storage box plans are sized for storage, not compute. Method 5 is the closer-to-PBS path; running the full server on a storage box isn't.

Don't use sshfs as a PBS datastore. PBS's chunk operations need atomic rename() and reliable atime updates. FUSE mounts break under load. PBS will appear to work for hours and then start losing chunks.

See also

- Storage Boxes — what storage boxes are and what they're built for

- Proxmox VE — how Pulsed Media uses Proxmox internally

- Rclone — full rclone reference

- Rclone SFTP remote mounting Seedbox — rclone with a seedbox

- Rootless Docker — required for the MinIO path

- Pulsed Media — company overview